Introduction: AI Governance

AI is changing our world fast, from supporting doctors to helping cities run more efficiently. But AI also brings big challenges: bias, privacy risks, and unclear responsibility when things go wrong. These problems show why we need strong AI governance models.

Effective AI governance models aren’t just for the future; they’re vital now. Organizations need them to use AI’s power safely. In the U.S. and globally, the big question isn’t if we need governance, but how. This guide will break down AI governance, explore different AI governance models, and show how to put them into practice.

What Are AI Governance Models?

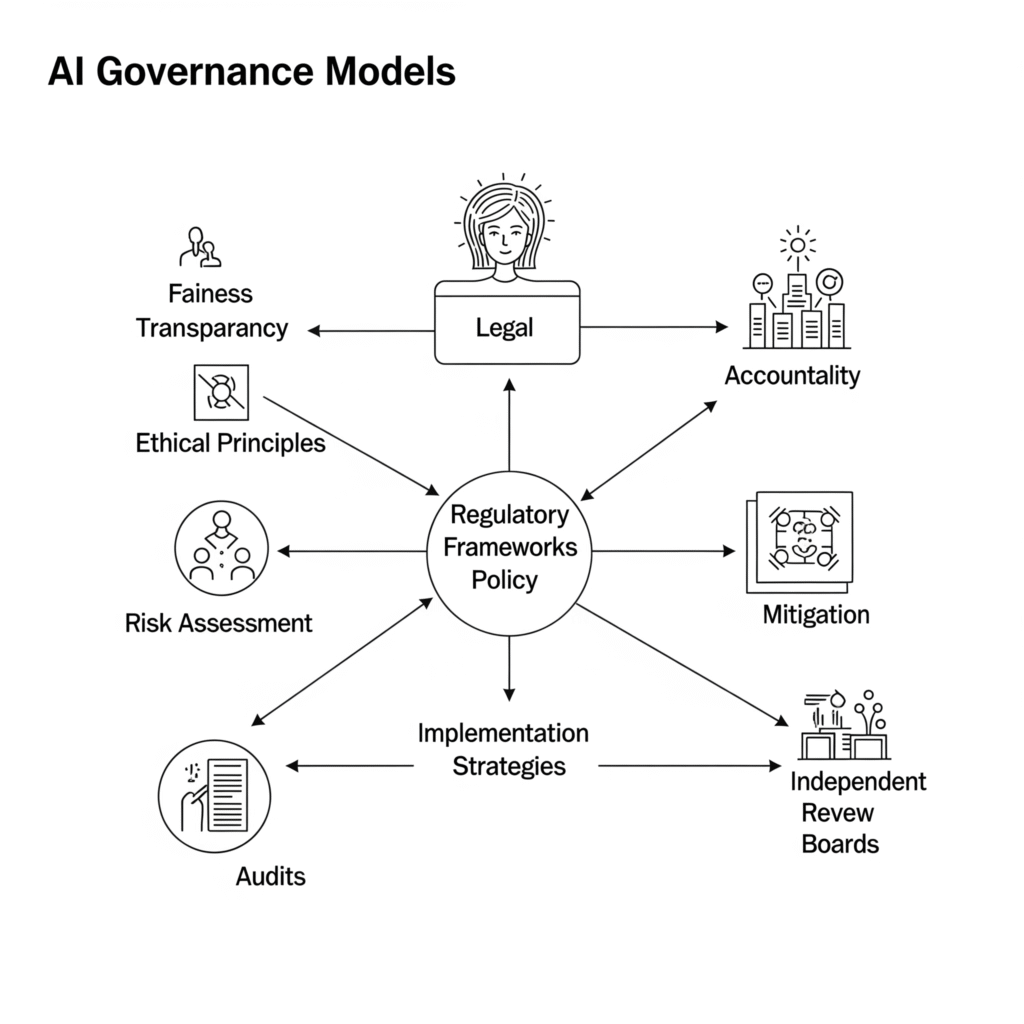

AI governance is a system of rules and frameworks. It makes sure AI is built and used ethically, responsibly, and legally. It’s about building trust and accountability through the whole AI journey. Think of it as setting the safety rules for AI innovation.

While data governance handles data, AI governance models deal with AI’s unique issues:

- Algorithmic Bias: AI can pick up and spread existing unfairness from its training data.

- Explainability: It’s hard to understand why complex AI systems make certain decisions.

- Accountability: Who takes the blame if an AI system causes harm?

- Emergent Behavior: AI can act in unexpected ways in real situations.

To handle these, AI governance models focus on key areas:

- Transparency: Showing how AI works.

- Fairness: Making sure AI doesn’t discriminate.

- Accountability: Clear roles for who’s responsible.

- Privacy: Protecting user data.

- Safety: Ensuring AI is reliable and harmless.

- Ethics: Aligning AI with human values.

These points are the foundation for good models. They help ensure AI serves us well.

Different AI Governance Models in Practice

There are many types of AI governance models. They range from broad ideas to strict laws and industry rules. Knowing these helps any organization set up its own governance plan.

1. Principles-Based Guidance: The Moral Compass for AI Governance Models

Many governance models start with principles. These are high-level rules for ethical AI. They guide AI development without strict commands.

- OECD AI Principles: Over 40 countries, including the U.S., follow these. They promote “trustworthy AI” focusing on human values, transparency, and accountability. They shape national AI strategies.

- EU Ethics Guidelines: The EU’s Ethics Guidelines for Trustworthy AI (2019) set the stage for the EU AI Act. They cover human oversight, privacy and data governance, transparency, fairness, and accountability.

Pros: Flexible, adaptable, and build agreement on ethics.

Cons: Not legally binding, can be vague, and might lack real impact without stronger support.

2. Regulatory and Legal Frameworks: Law Behind AI Governance Models

Governments are increasingly writing AI governance into law. These laws bring mandatory rules and penalties. They create a more standardized and enforceable environment for AI.

- The EU AI Act: This is a major global AI law. It sorts AI systems into risk tiers: unacceptable, high, limited, and minimal risk. It bans unacceptable-risk practices and sets strict rules for high-risk AI, such as systems used in critical infrastructure. U.S. companies that offer AI systems in the EU may need to follow these rules.

- GDPR: This data privacy law is not specific to AI, but it affects how AI uses personal data, especially for U.S. companies with EU users.

- U.S. Federal, State, and Local Initiatives:

  - NIST AI Risk Management Framework (AI RMF): This voluntary guide from the U.S. National Institute of Standards and Technology helps organizations manage AI risks. It’s a top example of AI governance models for U.S. businesses.

  - Executive Order on AI (2023): Executive Order 14110 set federal direction on AI safety, privacy, and ethics standards. It was rescinded in January 2025, a reminder that federal AI policy can shift quickly.

  - State and Local Regulations:

    - New York City AI Law (2023): Local Law 144 requires bias audits of automated tools used in hiring decisions.

    - California AI Transparency Act (2026): Requires AI-content disclosure from providers of generative AI tools with large user bases.

These show a growing, though varied, legal landscape for AI governance in the U.S.
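To make the risk-based idea concrete, some teams keep an internal inventory that tags each AI use case with a tier. Here is a minimal sketch of that idea, not a legal classification. The tier names follow the EU AI Act’s four commonly described levels; the use-case names, the mapping, and the `triage` helper are hypothetical and only for illustration.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"  # prohibited practices
    HIGH = "high"                  # strict obligations apply
    LIMITED = "limited"            # mainly transparency duties
    MINIMAL = "minimal"            # no specific obligations


# Hypothetical mapping for illustration only. Real classification
# needs legal review of the Act itself.
USE_CASE_TIERS = {
    "critical_infrastructure_control": RiskTier.HIGH,
    "resume_screening": RiskTier.HIGH,
    "customer_service_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}


def triage(use_case: str) -> RiskTier:
    """Return the recorded tier for a use case.

    Unknown use cases default to HIGH so they get reviewed
    instead of being waved through.
    """
    return USE_CASE_TIERS.get(use_case, RiskTier.HIGH)


if __name__ == "__main__":
    for name in ("spam_filter", "resume_screening", "new_unreviewed_tool"):
        print(f"{name}: {triage(name).value}")
```

Defaulting unknown use cases to the high tier is a design choice in this sketch: it trades some extra review work for a lower chance of missing a risky system.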

Pros: Legal certainty, builds public trust, and encourages widespread responsible practices.

Cons: Can be slow to update, might slow innovation, and varied state laws create complexity.

3. Industry Self-Governance & Standards: Proactive AI Governance Models

Many organizations create their own models. These include internal policies, codes of conduct, and technical standards. This approach is flexible and fits specific business needs.

- Company AI Ethics Boards: Companies like Google and Microsoft have internal groups to guide ethical AI. These are key parts of their internal AI governance models. IBM, for its part, works with clients like American Airlines to build ethical AI solutions.

- ISO Standards for AI: The International Organization for Standardization (ISO), together with the International Electrotechnical Commission (IEC), publishes standards like ISO/IEC 42001. These guide organizations in building and improving AI management systems.

- Industry Alliances: Groups like the Partnership on AI bring together experts to set best practices for responsible AI.

Pros: Agile, practical, tailored, and builds a culture of responsibility.

Cons: Voluntary, can lead to “ethics washing” (seeming ethical without real commitment), and lacks outside oversight.

Implementing AI Governance: Your Roadmap

Effective AI governance models aren’t just documents; they’re active systems. For U.S. businesses, this means being strategic and proactive.

- Get Leadership Buy-in: Top leaders must support AI governance. They need to set clear goals aligned with company values.

- Form a Cross-Functional Team: AI affects everyone. Build a committee with legal, IT, data science, and business leaders. This ensures a broad view of AI governance models.

- Prioritize Data Governance: Good data is key. Ensure data is collected ethically, accurate, and secure.

  - Track data lineage.

  - Use privacy-preserving methods.

  - Regularly check for data bias.

- Integrate Governance Throughout AI’s Life: Embed rules at every stage.

  - Design: Do AI Impact Assessments (AIAs) early.

  - Development: Test for bias and safety.

  - Deployment: Ensure human oversight.

  - Monitoring: Continuously track performance.

  - Retirement: Safely remove old AI systems.

- Monitor and Audit Continuously: Keep an eye on deployed AI systems. Use tools to check for bias and compliance, and commission independent audits. A leading U.S. bank, for example, used real-time monitoring in its credit card system, a success story for strong AI governance.

- Be Transparent and Document Everything:

  - Create “model cards” with AI system details.

  - Clearly explain AI to users.

- Build an AI-Responsible Culture: Train employees on AI ethics and policies. Reward responsible AI practices.
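Two of the steps above, model cards and lifecycle checks, can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a standard schema: the `ModelCard` fields, the artifact names, and the `missing_artifacts` helper are all hypothetical, and a real organization would define its own.

```python
from dataclasses import dataclass, field

# Hypothetical evidence required before a system may enter each stage.
# Stage names follow the lifecycle list above; artifact names are illustrative.
REQUIRED_ARTIFACTS = {
    "design": {"impact_assessment"},
    "development": {"impact_assessment", "bias_test_report"},
    "deployment": {
        "impact_assessment",
        "bias_test_report",
        "human_oversight_plan",
    },
}


@dataclass
class ModelCard:
    """A short, human-readable record of what an AI system is for."""
    name: str
    owner: str
    intended_use: str
    training_data_sources: list[str]
    known_limitations: list[str] = field(default_factory=list)
    artifacts: set[str] = field(default_factory=set)


def missing_artifacts(card: ModelCard, stage: str) -> set[str]:
    """Return the artifacts still needed before `stage` can start."""
    return REQUIRED_ARTIFACTS[stage] - card.artifacts


if __name__ == "__main__":
    card = ModelCard(
        name="loan-screening-v2",
        owner="credit-risk-team",
        intended_use="Rank applications for manual review",
        training_data_sources=["internal_applications_2019_2023"],
        known_limitations=["Not validated for small-business loans"],
        artifacts={"impact_assessment"},
    )
    print(sorted(missing_artifacts(card, "deployment")))
```

A gate like this does not prove a system is safe. It only makes sure the agreed evidence exists before the next stage begins.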

Real-World Stakes: The Impact of AI Governance Models

Poor AI governance models can lead to big problems:

- Amazon’s Biased Hiring Tool (2018): Amazon had to scrap an AI hiring tool. It unfairly favored male candidates because it learned from historical, biased data. This shows how weak AI governance models can cause discrimination.

- IBM Watson for Oncology (2018): This AI gave unsafe cancer treatment advice. It lacked enough real patient data and validation. This was a clear failure of AI governance in healthcare.

- COMPAS Algorithm: This U.S. justice system tool was accused of racial bias. Its lack of transparency made it hard to verify whether its outputs were fair.
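Disparities like these can often be spotted with a simple selection-rate comparison. The sketch below is one common approach, assuming you have a list of (group, selected) outcomes. The data is synthetic, the function names are illustrative, and the 0.8 cutoff is the widely cited “four-fifths” rule of thumb, not a legal test.

```python
from collections import Counter


def selection_rates(records):
    """Compute the share of each group that was selected.

    records: iterable of (group, selected) pairs, where selected is a bool.
    """
    totals, chosen = Counter(), Counter()
    for group, selected in records:
        totals[group] += 1
        if selected:
            chosen[group] += 1
    return {g: chosen[g] / totals[g] for g in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        raise ValueError("no selections; impact ratio is undefined")
    return {g: r / best for g, r in rates.items()}


if __name__ == "__main__":
    # Synthetic example: 50 of 100 selected in group A, 30 of 100 in group B.
    records = (
        [("A", True)] * 50 + [("A", False)] * 50
        + [("B", True)] * 30 + [("B", False)] * 70
    )
    ratios = impact_ratios(selection_rates(records))
    for group, ratio in sorted(ratios.items()):
        flag = "review" if ratio < 0.8 else "ok"
        print(f"{group}: impact ratio {ratio:.2f} ({flag})")
```

A low ratio is a signal to investigate, not a verdict. It says nothing about why the gap exists.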

However, the case for strong AI governance models keeps growing:

The global AI market is booming, projected to reach USD 1,811.75 billion by 2030 from USD 279.22 billion in 2024. This growth makes good AI governance models even more crucial.
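To put that forecast in perspective, you can work out the yearly growth rate the two figures imply. The sketch below assumes six compounding years (2024 to 2030), which is our reading of the forecast window, and the `cagr` helper name is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate as a fraction (0.10 means 10%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


if __name__ == "__main__":
    # Market size figures in USD billions, taken from the text above.
    rate = cagr(279.22, 1811.75, 2030 - 2024)
    print(f"Implied CAGR: {rate:.1%}")
```

Under that assumption, the market would need to grow by somewhere between 36% and 37% every year to hit the 2030 figure.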

A KPMG study (2025) found a gap: 44% of U.S. workers use unauthorized AI, and 46% upload sensitive data to public AI tools. Yet only 54% believe their company has AI policies. This shows a huge need for better AI governance models.

Effective AI governance models help:

- Build Trust: While only 41% of people in the U.S. trust AI generally, 72% trust healthcare providers with it. Good AI governance models can bridge this gap.

- Reduce Risks: Good governance helps prevent costly lawsuits and fines. The city of Amarillo, Texas, built public trust by developing a transparent AI assistant, a strong example of public sector AI governance.

- Boost Responsible Innovation: Clear rules let companies innovate with confidence. PwC, for instance, is helping clients develop AI governance models while building effective AI products.

The Road Ahead: Challenges and Evolving AI Governance Models

AI governance is new and changing fast. Key challenges include:

- Speed of AI vs. Law: AI moves faster than laws can adapt. This calls for flexible AI governance models.

- Global Harmony: Different rules worldwide make consistent models hard for global companies.

- Complex AI: It’s tough to explain how advanced AI makes decisions, which undermines transparency.

- Generative AI: New issues like deepfakes and misinformation require fresh approaches to AI governance.

Despite these, trends show promise:

- AI for Governance: Using AI to automate compliance and risk checks.

- Focus on High-Risk AI: Stricter rules for AI in critical areas like finance.

- Accountability for AI Content: New laws like the U.S. TAKE IT DOWN Act (2025) address harmful AI-generated content, in this case nonconsensual intimate imagery, including deepfakes.

- Human Oversight: Keeping humans involved in AI decisions.

- Industry-Specific Governance: Tailored AI governance models for sectors like healthcare.
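Human oversight, in particular, is easy to picture in code. One common pattern sends low-confidence or adverse decisions to a person instead of acting on them automatically. The sketch below is a minimal illustration: the `Decision` fields, the 0.9 threshold, and the rule that every denial gets reviewed are all assumptions, not requirements from any law or framework.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    case_id: str
    outcome: str       # e.g. "approve" or "deny"
    confidence: float  # model's score in [0, 1]


def route(
    decision: Decision,
    threshold: float = 0.9,
    always_review: frozenset = frozenset({"deny"}),
) -> str:
    """Return "auto" or "human_review" for a model decision.

    Adverse outcomes and low-confidence cases go to a person.
    """
    if decision.outcome in always_review:
        return "human_review"
    if decision.confidence < threshold:
        return "human_review"
    return "auto"


if __name__ == "__main__":
    cases = [
        Decision("c-1", "approve", 0.97),
        Decision("c-2", "approve", 0.62),
        Decision("c-3", "deny", 0.99),
    ]
    for case in cases:
        print(f"{case.case_id}: {route(case)}")
```

Where to set the threshold is a policy question as much as a technical one, and it belongs with the governance team rather than with the model alone.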

Conclusion: Building Trust in the AI Era with Strong AI Governance Models

AI has huge potential. But to use it well, we must govern it responsibly. Strong AI governance models aren’t just about rules; they’re about building trust, cutting risks, and driving innovation.

For organizations everywhere, especially in the U.S., embracing AI governance models is essential. By using solid frameworks, being open, and fostering a responsible AI culture, we can confidently navigate AI’s future. The ongoing evolution of AI governance models will be key to unlocking AI’s true benefits.

What aspect of AI governance do you find most challenging or most critical for the future? Share your thoughts in the comments below!

Sources

This blog post uses information from several trusted sources to explain AI governance models. Global AI market forecasts come from Grand View Research and NextMSC. Data on U.S. worker AI use, company policies, and public trust is from the KPMG “Trust, Attitudes and Use of Artificial Intelligence: A Global Study 2025.”

Examples of AI failures, like Amazon’s hiring tool, are widely reported in tech news, including analysis from IMD Business School. Concerns about IBM Watson for Oncology are discussed by The ASCO Post. The bias of the COMPAS algorithm is detailed by ProPublica. Information on the NIST AI Risk Management Framework comes directly from NIST. Details on the New York City AI Law, the California AI Transparency Act, and the federal TAKE IT DOWN Act are from law firms like K&L Gates and Orrick, and from official government sources. Information on the City of Amarillo, Texas, project comes from local news coverage. Insights into PwC’s AI governance work are from the firm’s official website.

Disclaimer

This blog post provides general information only. It’s not legal, financial, or professional advice. While we aim for accuracy, AI and its governance are constantly changing. Please consult qualified professionals for specific advice about AI governance models or compliance needs. DigitalAiLiens does not guarantee the completeness, accuracy, or reliability of this information. Any reliance on it is at your own risk.